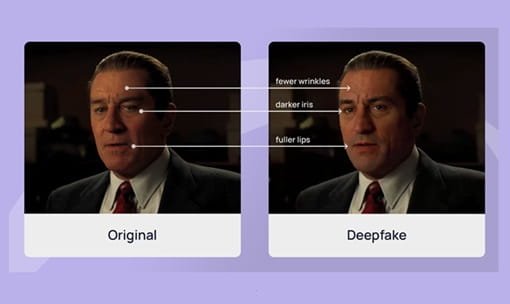

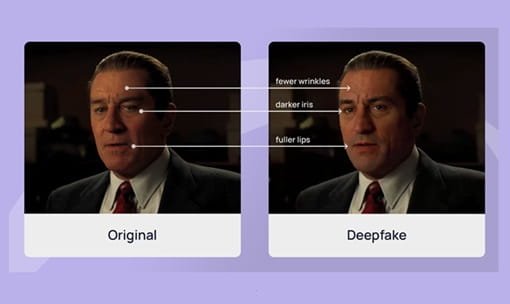

Deepfakes are AI-generated or AI-manipulated videos, images, or audio recordings in which a person’s likeness or voice is synthetically replicated to create false representations of events or statements that never occurred. The technology has advanced from a niche academic curiosity to a widely accessible tool within a few years — and with it have come serious harms: non-consensual intimate imagery, financial fraud, political misinformation, and reputational destruction. India has responded with increasing legislative urgency. A landmark IT Rules amendment in February 2026 created India’s first formal framework specifically targeting deepfakes and AI-generated harmful content.

The Short Answer: Deepfakes Are Not All Illegal — But Harmful Deepfakes Are

Creating and sharing deepfakes is not universally illegal in India. Certain deepfakes are entirely legal: satire, parody, clearly labelled fictional content, educational demonstrations, accessibility tools (such as text-to-speech), and artistic expression that does not mislead. Films, entertainment, and digital art regularly use AI-generated visual effects and deepfake-adjacent technologies legally.

What is illegal is creating, hosting, sharing, or disseminating deepfakes that: impersonate real individuals to deceive; create non-consensual intimate or sexual imagery of identifiable persons; spread fabricated political or electoral information; forge documents; facilitate financial fraud; violate privacy; or harm reputation. The IT Act, 2000, the Bharatiya Nyaya Sanhita (BNS), 2023, the Digital Personal Data Protection (DPDP) Act, 2023, and the new IT Rules 2026 collectively address these harms.

The IT Rules 2026: India’s First Formal Deepfake Framework

On February 10, 2026, MeitY (Ministry of Electronics and Information Technology) notified the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Amendment Rules, 2026, effective from February 20, 2026. This is India’s first explicit statutory framework addressing AI-generated content including deepfakes.

Key elements: the rules formally define ‘Synthetically Generated Information’ (SGI) as AI-created or AI-modified audio, visual, or audio-visual content that creates false representations of real events or people; platforms must display visible labels on AI-generated content and embed digital provenance metadata; a 3-hour takedown deadline applies to harmful AI-generated content flagged by government or courts (reduced from the previous 36 hours); a 2-hour deadline applies for AI-generated non-consensual intimate imagery; platforms that fail these obligations lose their Section 79 IT Act safe harbour protection and face liability for user-generated harmful content.

The rules also require platforms enabling SGI creation (generative AI tools, video editing apps, voice cloning services) to warn users against creating prohibited categories and design interfaces that do not facilitate harmful deepfake creation.

Legal Provisions Criminalising Harmful Deepfakes

Even before the 2026 rules, multiple existing laws criminalised deepfake-related harms. Under the IT Act, 2000: Section 66C (identity theft through fraudulent impersonation) carries up to 3 years imprisonment and a fine of up to Rs 1 lakh; Section 66D (cheating by impersonation) carries the same penalties; Section 66E (non-consensual privacy violations, including intimate deepfakes) carries up to 3 years imprisonment, a fine of up to Rs 2 lakh, or both; Section 67 (obscene content) carries up to 3 years and a fine of up to Rs 5 lakh on first conviction; Section 67A (sexually explicit content) carries up to 5 years and a fine of up to Rs 10 lakh on first conviction.

Under the BNS, 2023: Section 111 — organised cybercrime involving coordinated deepfake campaigns; Section 212 — furnishing false information through synthetic content; Section 353 — statements causing public mischief. Under the DPDP Act 2023: deepfakes processing biometric data (face, voice) without consent can attract fines up to Rs 250 crore.

Under the Protection of Children from Sexual Offences (POCSO) Act, 2012: deepfake sexual content involving minors carries the most severe penalties, with mandatory prosecution and stringent imprisonment terms.

High-Profile Cases: Enforcement Has Begun

Since 2023, Indian courts and law enforcement have responded to deepfake harms with urgency. The Rashmika Mandanna deepfake video of 2023, a non-consensual deepfake that went viral, prompted Prime Minister Modi to publicly call it a crisis and spurred accelerated regulatory action. The Delhi High Court issued rapid protective orders, and content was taken down across platforms.

In the Sadhguru Jagadish Vasudev case (CS COMM 578/2025, Delhi High Court, May 2025), Justice Saurabh Banerjee issued a landmark ruling on personality rights protection against AI misuse, establishing that public figures have a right to protection of their likeness against AI-generated impersonation.

In 2025, police in Navsari arrested a man sharing a deepfake of Prime Minister Modi within 24 hours of the complaint. High Courts have ordered content removal within 12–18 hours in urgent intimate-image deepfake cases, demonstrating the judicial system’s responsiveness to the technology’s harms.

What Users and Platforms Must Do Under IT Rules 2026

For individual users: do not create deepfakes that impersonate real people without consent; do not create or share non-consensual intimate deepfakes; do not create political deepfakes designed to deceive voters; always clearly label AI-generated or AI-manipulated content as such. Legitimate satire and clearly labelled parody remain permissible.

For platforms and intermediaries: comply with the 3-hour (general) and 2-hour (intimate images) takedown timelines; deploy technical tools to identify and block prohibited SGI; embed labelling and metadata requirements in product design; inform users of SGI rules at least once every three months; maintain round-the-clock monitoring systems; disclose the identity of deepfake creators to police when legally requested.
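For a compliance team, the two takedown windows reduce to simple date arithmetic. The following is a minimal sketch in Python, assuming the 3-hour and 2-hour windows as described in this article. The names `NoticeType`, `TAKEDOWN_WINDOWS`, `takedown_deadline`, and `is_overdue` are hypothetical and do not come from the rules, and the sketch assumes the clock starts when the notice is received, which should be checked against the notified text.

```python
from datetime import datetime, timedelta, timezone
from enum import Enum


class NoticeType(Enum):
    """Hypothetical categories mirroring the two windows described in the article."""
    GENERAL_SGI = "general"          # harmful AI-generated content flagged by government or courts
    INTIMATE_IMAGERY = "intimate"    # AI-generated non-consensual intimate imagery


# Windows as stated in this article; verify against the notified rules before relying on them.
TAKEDOWN_WINDOWS = {
    NoticeType.GENERAL_SGI: timedelta(hours=3),
    NoticeType.INTIMATE_IMAGERY: timedelta(hours=2),
}


def takedown_deadline(received_at: datetime, notice_type: NoticeType) -> datetime:
    """Return the latest time by which the flagged content should be removed."""
    if received_at.tzinfo is None:
        # Naive timestamps make deadlines ambiguous across time zones.
        raise ValueError("received_at must be timezone-aware")
    return received_at + TAKEDOWN_WINDOWS[notice_type]


def is_overdue(received_at: datetime, notice_type: NoticeType, now: datetime) -> bool:
    """True if the removal window has already passed at time `now`."""
    return now > takedown_deadline(received_at, notice_type)


IST = timezone(timedelta(hours=5, minutes=30))
received = datetime(2026, 3, 1, 22, 30, tzinfo=IST)  # illustrative notice time

print(takedown_deadline(received, NoticeType.GENERAL_SGI).isoformat())
print(takedown_deadline(received, NoticeType.INTIMATE_IMAGERY).isoformat())
```

Requiring timezone-aware timestamps is a design choice of this sketch: a notice received late at night rolls the deadline into the next calendar day, and naive timestamps make that easy to get wrong.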

Final Thought

Deepfakes are not categorically illegal in India, but harmful deepfakes most certainly are, and the legal consequences are severe. The IT Rules 2026 create India’s first formal deepfake regulatory framework with 3-hour takedown mandates and platform accountability. Multiple existing laws, including the IT Act, the BNS, and the DPDP Act, impose liability for impersonation, intimate deepfakes, fraud, and public mischief. If you are a victim of a deepfake, act immediately: save evidence, file a complaint at cybercrime.gov.in, and seek a High Court takedown order in urgent cases. The legal system is responding to the deepfake crisis with increasing speed and effectiveness.

Frequently Asked Questions (FAQs)

Q1. Is creating a deepfake always illegal in India?

No. Creating a deepfake is not categorically illegal. Satire, parody, clearly labelled fictional or artistic content, educational demonstrations, and accessibility tools are legal. What is illegal is creating deepfakes that impersonate real people to deceive, create non-consensual intimate imagery, spread fabricated political content, or facilitate fraud. The IT Rules 2026 require all AI-generated content to be clearly labelled.

Q2. What law applies to non-consensual intimate deepfakes?

Non-consensual intimate deepfakes are criminalised under IT Act Section 66E (privacy violation: up to 3 years imprisonment and a fine of up to Rs 2 lakh), Section 67 (obscene content: up to 3 years and a fine of up to Rs 5 lakh), and Section 67A (sexually explicit content: up to 5 years and a fine of up to Rs 10 lakh). The IT Rules 2026 require platforms to remove such content within 2 hours of complaint. POCSO applies if the victim is a minor, with the most severe penalties.

Q3. What is the 3-hour takedown rule under IT Rules 2026?

Under the IT Rules 2026 (effective February 20, 2026), platforms must remove harmful AI-generated content (deepfakes, synthetic misinformation, forged documents) within 3 hours of receiving a government or court-ordered takedown notice — reduced from the previous 36-hour window. For AI-generated non-consensual intimate imagery specifically, the deadline is 2 hours. Platforms that miss these deadlines lose their Section 79 IT Act safe harbour protection.

Q4. How do I report a deepfake video targeting me?

Immediately save all evidence — screenshots, URLs, upload dates, and any identifying information. File a complaint at the National Cyber Crime Reporting Portal (cybercrime.gov.in) — this is mandatory for sexual deepfakes. File at your nearest police cyber cell. For urgent cases involving intimate images, approach the High Court for a rapid takedown order — courts have issued orders within 12–18 hours in such cases. NGOs like iCall or the Cyber Peace Foundation can provide support.
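One way to keep saved evidence organised is a small log with one entry per item. The sketch below is illustrative only: the function name `evidence_entry` and its field names are hypothetical, the URL and dates are placeholders, and recording a SHA-256 fingerprint of each screenshot is an assumption of this example rather than a requirement stated by the portal or the rules.

```python
import hashlib
import json
from datetime import datetime, timezone


def evidence_entry(url: str, upload_date: str, screenshot: bytes, captured_at: datetime) -> dict:
    """Describe one saved item: where it was found, when, and a fingerprint of the screenshot."""
    return {
        "url": url,
        "upload_date": upload_date,
        # Store the capture time in UTC so entries from different devices line up.
        "captured_at": captured_at.astimezone(timezone.utc).isoformat(),
        # The hash lets anyone later confirm the saved file has not been altered.
        "screenshot_sha256": hashlib.sha256(screenshot).hexdigest(),
    }


# Placeholder values for illustration only.
entry = evidence_entry(
    url="https://example.com/video/123",
    upload_date="2026-03-01",
    screenshot=b"raw bytes of the saved screenshot file",
    captured_at=datetime(2026, 3, 1, 17, 0, tzinfo=timezone.utc),
)
print(json.dumps(entry, indent=2))
```

In practice the `screenshot` bytes would be read from the saved image file, and the resulting entries can be kept alongside the files when filing the complaint.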

Q5. Can public figures sue for deepfakes of themselves?

Yes. Public figures have successfully obtained legal relief against deepfakes of their likeness. The Delhi High Court’s 2025 Sadhguru case established personality rights protection against AI impersonation. Courts have ordered takedowns and damages for reputational harm. The IT Rules 2026, BNS provisions on defamation and public mischief, and the DPDP Act’s biometric data protections all provide legal avenues. Public figures may also seek injunctions preventing future misuse of their likeness.